New SE CASC Supported Publication Uses Eye-Tracking Technology to Evaluate a Web-Based Climate Decision Support System

Spring 2018 Global Change Fellow Lindsay Maudlin is the lead author of a new publication titled “Website Usability Differences between Males and Females: An Eye-Tracking Evaluation of a Climate Decision Support System,” recently published in the journal Weather, Climate, and Society. The publication also includes the work of SE CASC PI Karen S. McNeal; USGS Deputy Director Ryan Boyles; 2015 – 2016 Global Change Fellow Rachel Atkins; and Heather Dinon-Aldridge and Corey Davis of the State Climate Office of NC. This work was supported through the SE CASC Global Change Fellows Program. The following summary was written by Lindsay Maudlin.

As scientists, we put a lot of effort into generating information and communicating that information. For many of us, our efforts result in publications ultimately read by experts in our fields. For others, the end result might be a decision support tool used by stakeholders to make climate-related decisions. In either case, we want to know that our information is communicated clearly, is useful, and is easily understood by our audience. The only way to know how effective our end results are is through the important but often overlooked process of evaluation. By asking the right questions, implementing a specific protocol, and using valid and reliable methods, we can gauge how well the results are received by our intended audience.

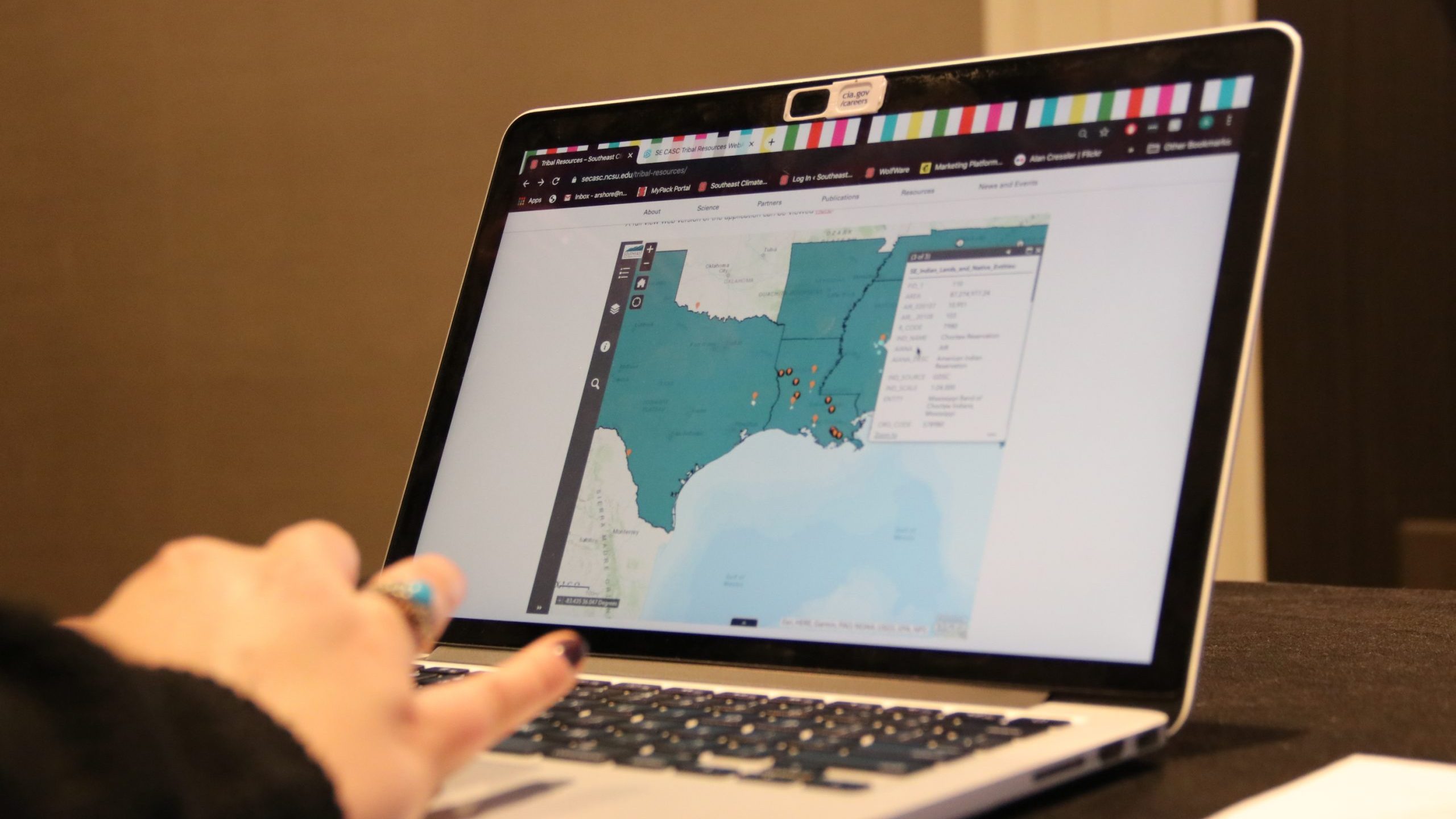

In this study, a web-based climate decision support system, the Pine Integrated Network: Education, Mitigation, and Adaptation Project Decision Support System (PINEMAP DSS), was evaluated. The PINEMAP DSS is a suite of tools developed by the State Climate Office of North Carolina for foresters in the southeastern United States as they make decisions regarding loblolly pine forests in the region. In addition to measuring the overall usability of the PINEMAP DSS, we were interested in studying whether end-user characteristics such as age, gender, education level, and experience influenced usability.

To do this, we employed a mixed methods approach, which used both quantitative and qualitative data, and a study design meant to guide study participants through a process modeled after the steps foresters would take while using the PINEMAP DSS to make an actual decision. Our quantitative data set included data collected using eye tracking, a technology that tells us when, where, and for how long a person looks at information on a computer screen. Eye tracking of multiple individuals can help us identify common patterns and search behaviors among study participants working through the same process. Once common patterns and search behaviors were identified, qualitative data were used to support or explain those findings.

This article describes the results related to usability differences between genders; an upcoming article will focus on the findings related to end-user age, education level, and experience. In general, the differences we observed between males and females in this study centered on how and where they focused their visual attention within the PINEMAP DSS. For example, males tended to focus the majority of their visual attention on the map-based climate information, while females tended to focus the majority of theirs on other PINEMAP DSS features such as informative text, menu options, and aspects of the tools that could be toggled off and on.

Ultimately, these general patterns and search behaviors influenced participants' performance on a series of multiple-choice questions about the content they had interacted with. Males outperformed females on these questions, not because males are more capable of answering them, but because males had focused their visual attention on the information most relevant to answering the questions correctly.

In addition to helping determine whether end-user characteristics influenced usability, our results were able to inform the PINEMAP DSS developers of website features that repeatedly served as stumbling blocks to study participants. These features were updated to become more user-friendly and visible. An added bonus of evaluations, therefore, is the opportunity to find stumbling blocks and suggest changes to improve the overall clarity, usefulness, and understandability of our information.

An intense evaluation using eye tracking is not necessary for every science communication endeavor, but giving some thought to our audiences and their backgrounds can go a long way. The same rule many of us were taught in Communications 101 class – “know your audience” – rings true here, too. Some helpful questions to ask ourselves include: 1. Who does my audience include? 2. What background information do I expect them to already know? and 3. What kinds of experiences do they bring with them that might influence how they interpret my information? Anticipating potential stumbling blocks or differences between groups of end-users, and taking steps to mitigate those issues, can also go a long way. One helpful tip is to draw audience attention to the most important aspects of your figures. Little edits such as bold text, in-text cues referencing aspects of a related figure, arrows pointing to important data points, or highlighted critical features can help offset any potential differences between groups of end-users.

Maudlin, L.C., K.S. McNeal, H. Dinon-Aldridge, C. Davis, R. Boyles, and R.M. Atkins, 2020: Website Usability Differences between Males and Females: An Eye-Tracking Evaluation of a Climate Decision Support System. Wea. Climate Soc., 12, 183–192, https://doi.org/10.1175/WCAS-D-18-0127.1

Journal Abstract: Decision support systems—collections of related information located in a central place to be used for decision-making—can be used as platforms from which climate information can be shared with decision-makers. Unfortunately, these tools are not often evaluated, meaning developers do not know how useful or usable their products are. In this study, a web-based climate decision support system (DSS) for foresters in the southeastern United States was evaluated by using eye-tracking technology. The initial study design was exploratory and focused on assessing usability concerns within the website. Results showed differences between male and female forestry experts in their eye-tracking behavior and in their success with completing tasks and answering questions related to the climate information presented in the DSS. A follow-up study, using undergraduate students from a large university in the southeastern United States, aimed to determine whether similar gender differences existed and could be detected and, if so, whether the cause(s) could be determined. The second evaluation, similar to the first, showed that males and females focused their attention on different aspects of the website; males focused more on the maps depicting climate information while females focused more on other aspects of the website (e.g., text, search bars, and color bars). DSS developers should consider the possibility of gender differences when designing a web-based DSS and include website features that draw user attention to important DSS elements to effectively support various populations of users.
